Benchmark for evaluating language models on generation tasks in Indian languages

Paper Title:

IndicGenBench: A Multilingual Benchmark to Evaluate Generation Capabilities of LLMs on Indic Languages

Published on:

25 April 2024

Primary Category:

Computation and Language

Paper Authors:

Harman Singh, Nitish Gupta, Shikhar Bharadwaj, Dinesh Tewari, Partha Talukdar

Key Details

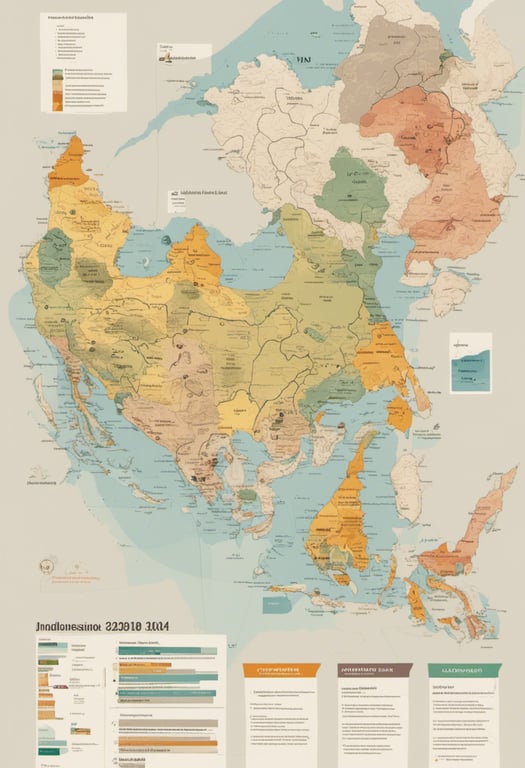

New benchmark, IndicGenBench, for evaluating language models on generation tasks in 29 Indic languages

Extends existing datasets by adding test data in under-resourced Indic languages through human translation

Contains summarization, translation, multilingual QA, and cross-lingual QA evaluation sets

Evaluates major language models and finds significant performance gaps in Indic languages relative to English

Released under an open license to facilitate research into more inclusive language models

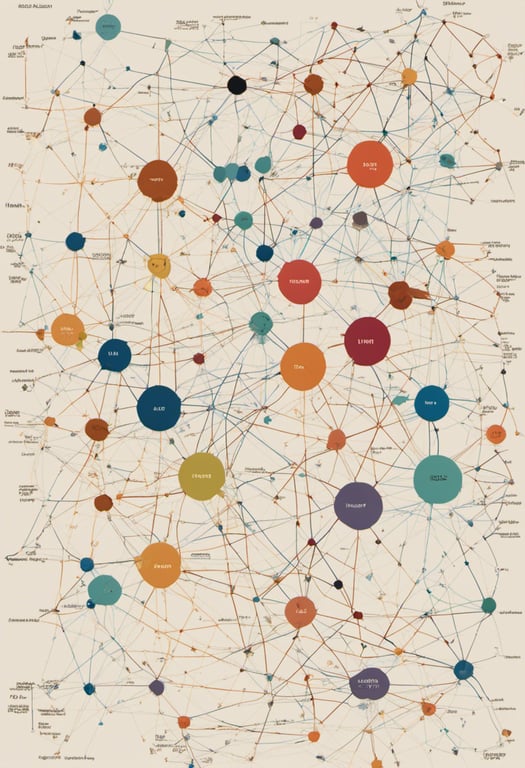

Explore the topics in this paper

AI generated summary

The authors release IndicGenBench, a new benchmark that measures the ability of language models to perform generation tasks such as summarization, translation, and question answering across 29 languages native to India. It extends existing datasets and, for the first time, provides multi-way parallel test data for many under-resourced Indic languages.
