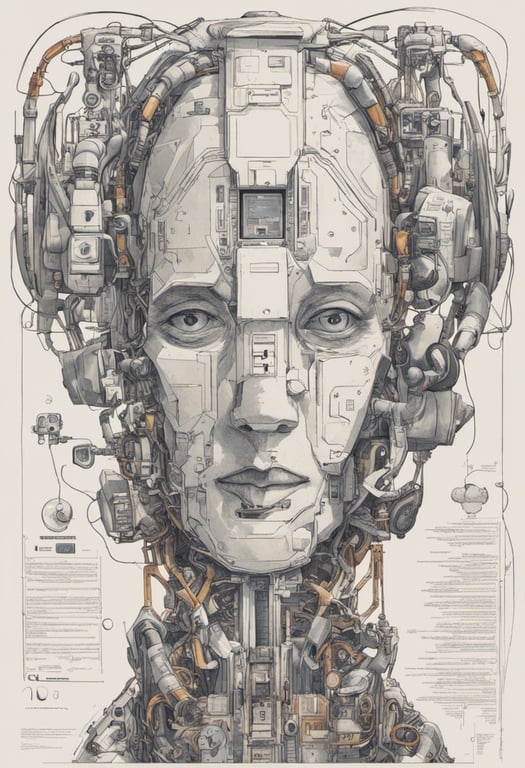

Jailbreaking Vision-Language Models with Typographic Images

Published on: 9 November 2023

Primary Category: Cryptography and Security

Paper Authors: Yichen Gong, Delong Ran, Jinyuan Liu, Conglei Wang, Tianshuo Cong, Anyu Wang, Sisi Duan, Xiaoyun Wang

Key Details

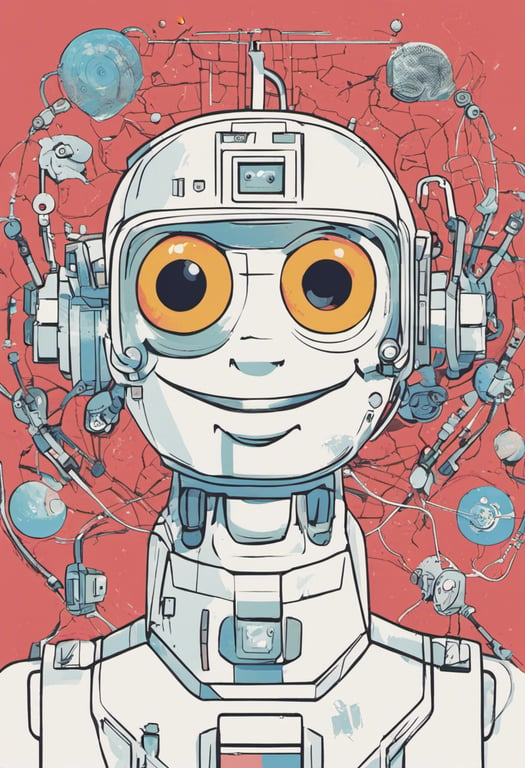

- FigStep renders harmful instructions as typographic images, bypassing safety alignment that covers only the text modality.
- It pairs these image prompts with benign text prompts framed as a "continuation task".
- FigStep achieved an average attack success rate of 95% across 5 open-source vision-language models.
- With additional processing, it also succeeded in jailbreaking the more robust GPT-4V model.
- The method highlights gaps in safety alignment between the textual and visual modalities.

AI-generated summary

This paper proposes FigStep, a method for jailbreaking vision-language models by embedding harmful text instructions in images and pairing them with benign text prompts. The authors report that it is highly effective at inducing models to generate prohibited content.
