Data-efficient 3D scene understanding for autonomous vehicles

Published on:

8 May 2024

Primary Category:

Computer Vision and Pattern Recognition

Paper Authors:

Lingdong Kong,

Xiang Xu,

Jiawei Ren,

Wenwei Zhang,

Liang Pan,

Kai Chen,

Wei Tsang Ooi,

Ziwei Liu


Key Details

Integrates LiDAR and camera data without needing extra image annotations

Mixes laser-beam regions between LiDAR scans to exploit spatial priors of driving scenes

Distills semantic features from images to LiDAR point clouds

Generates auxiliary labels for unlabeled data using CLIP

Matches the accuracy of fully supervised methods while using 5x fewer labels
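The laser-beam mixing bullet describes swapping regions between two LiDAR scans. Below is a minimal sketch, assuming the regions are equal-width bins of each point's inclination (pitch) angle and that alternating bins are taken from each scan. The function names, the `num_areas` parameter, and the default `pitch_range` are illustrative assumptions, not the paper's code.

```python
import numpy as np


def laser_partition(points: np.ndarray, num_areas: int,
                    pitch_range: tuple[float, float]) -> np.ndarray:
    """Assign each point to one of `num_areas` inclination bins.

    points: (N, 3+) array; the first three columns are x, y, z in the sensor frame.
    pitch_range: (min, max) inclination in radians (hypothetical parameter,
    meant to cover the sensor's vertical field of view).
    """
    x, y, z = points[:, 0], points[:, 1], points[:, 2]
    # Inclination of each point relative to the sensor's horizontal plane.
    pitch = np.arctan2(z, np.sqrt(x ** 2 + y ** 2))
    edges = np.linspace(pitch_range[0], pitch_range[1], num_areas + 1)
    # Digitize against the interior edges only, so indices fall in
    # [0, num_areas - 1]; points outside the range land in the end bins.
    return np.digitize(pitch, edges[1:-1])


def laser_mix(points_a, labels_a, points_b, labels_b, num_areas=6,
              pitch_range=(-0.433, 0.035)):
    """Swap alternating inclination areas between scans A and B.

    The default pitch_range is illustrative only (roughly a 64-beam
    sensor's vertical field of view); it is an assumption.
    Returns (A0, B1, A2, B3, ...) and (B0, A1, B2, A3, ...) as (points, labels).
    """
    even_a = laser_partition(points_a, num_areas, pitch_range) % 2 == 0
    even_b = laser_partition(points_b, num_areas, pitch_range) % 2 == 0
    mix1 = (np.concatenate([points_a[even_a], points_b[~even_b]]),
            np.concatenate([labels_a[even_a], labels_b[~even_b]]))
    mix2 = (np.concatenate([points_b[even_b], points_a[~even_a]]),
            np.concatenate([labels_b[even_b], labels_a[~even_a]]))
    return mix1, mix2
```

In a semi-supervised setup, scan A might carry ground-truth labels and scan B pseudo-labels from a teacher model; a model's predictions on each mixed scan would then be trained against the correspondingly mixed labels. Every point of both inputs ends up in exactly one of the two mixed scans.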


AI generated summary

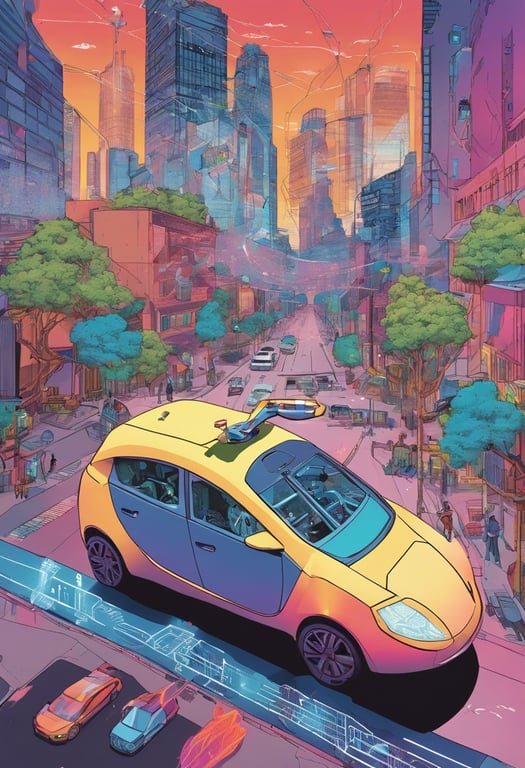


This paper proposes LaserMix++, a semi-supervised framework that uses both LiDAR point clouds and camera images to improve 3D scene understanding for autonomous driving while requiring far fewer labeled scans. Its key components are multi-modal data mixing, distillation of image features into point clouds, and auxiliary labels generated by a vision-language model (CLIP); together these strengthen consistency regularization and feature learning on unlabeled data.
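The summary does not spell out how image knowledge is transferred to points. A common approach is to project each LiDAR point into the camera image with the calibration matrix and pull the point's feature toward the image feature at that pixel. The sketch below assumes a single camera, a 3×4 projection matrix `proj`, nearest-pixel sampling, and a cosine-distance loss; `project_points` and `distill_loss` are hypothetical names, and the paper's actual objective may differ.

```python
import numpy as np


def project_points(points: np.ndarray, proj: np.ndarray, height: int, width: int):
    """Project LiDAR points into an image (assumed pinhole model).

    points: (N, 3+) array with xyz in the LiDAR frame.
    proj: (3, 4) matrix mapping homogeneous LiDAR coordinates to pixels
          (intrinsics times LiDAR-to-camera extrinsics; assumed calibrated).
    Returns integer (u, v) pixel coordinates (N, 2) and a validity mask (N,).
    """
    n = points.shape[0]
    homo = np.hstack([points[:, :3], np.ones((n, 1))])
    cam = homo @ proj.T  # rows are (u * d, v * d, d)
    depth = cam[:, 2]
    valid = depth > 1e-6  # keep only points in front of the camera
    uv = np.zeros((n, 2), dtype=np.int64)
    uv[valid] = np.floor(cam[valid, :2] / depth[valid, None]).astype(np.int64)
    # Keep only points whose pixel lies inside the image.
    valid &= (uv[:, 0] >= 0) & (uv[:, 0] < width) & (uv[:, 1] >= 0) & (uv[:, 1] < height)
    return uv, valid


def distill_loss(point_feats, image_feats, points, proj):
    """Mean (1 - cosine similarity) between point features and the image
    features at their projected pixels.

    point_feats: (N, C); image_feats: (H, W, C). NumPy has no autograd, so this
    only computes the loss value; in a training framework the image branch
    would typically be detached so it acts as a fixed target.
    """
    h, w, _ = image_feats.shape
    uv, valid = project_points(points, proj, h, w)
    if not valid.any():
        return 0.0
    p = point_feats[valid]
    q = image_feats[uv[valid, 1], uv[valid, 0]]  # rows indexed by v, columns by u
    p = p / (np.linalg.norm(p, axis=1, keepdims=True) + 1e-8)
    q = q / (np.linalg.norm(q, axis=1, keepdims=True) + 1e-8)
    return float(np.mean(1.0 - np.sum(p * q, axis=1)))
```

Points that fall outside the camera frustum are simply excluded, so only the camera-visible subset of each scan receives this extra supervision.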


You might also like

Detecting 3D Objects from Monocular Images with LiDAR Guidance

Using lidar data to train image segmentation models

Point cloud semantic features for 3D object detection

Augmenting rare vehicles with surround-view renderings

Multi-sensor road segmentation

Dynamic neural fields for novel view LiDAR synthesis
