Understanding and Improving Models with Vision-Language Surrogates

Paper Title:

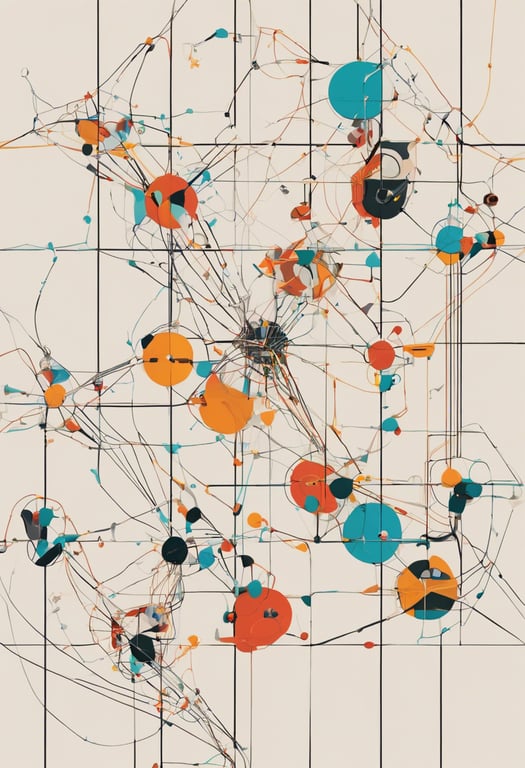

InterVLS: Interactive Model Understanding and Improvement with Vision-Language Surrogates

Published on:

6 November 2023

Primary Category:

Artificial Intelligence

Paper Authors:

Jinbin Huang, Wenbin He, Liang Gou, Liu Ren, Chris Bryan

Key Details

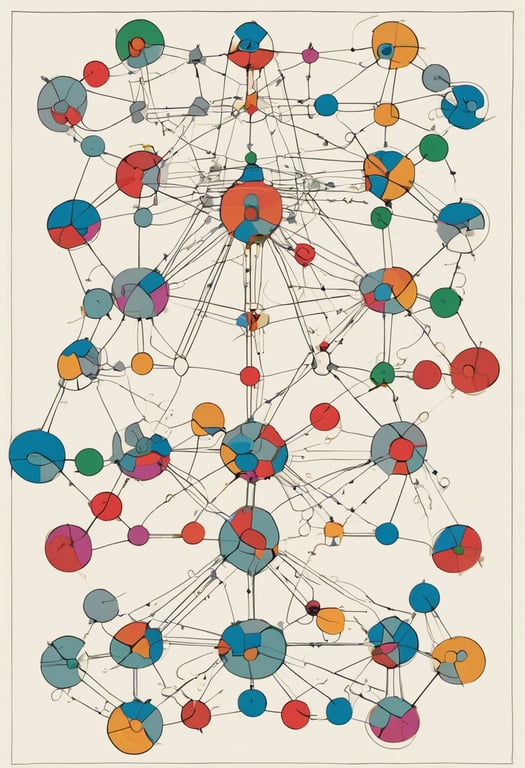

Discovers text-aligned concepts in images using the vision-language model CLIP-S4

Creates model-agnostic explanations by training linear surrogates on concepts

Enables interactive model improvement by letting users tune concept influences

Evaluated in a user study, which showed that it helps users gain insights into a model and boost its performance

Demonstrated in usage scenarios that improve multi-label image classification models

AI generated summary

This paper presents a system called InterVLS that helps users understand and improve deep learning models. It discovers concepts in images using both vision and natural language. These concepts are used to create explanations that are model-agnostic, meaning they work on any model without needing its internal details. Users can adjust concept influences to improve model performance.
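To make the surrogate idea concrete, here is a minimal sketch in Python with NumPy. It is an illustration under stated assumptions, not the InterVLS implementation: the summary does not say how the surrogate is fitted or how tuned influences are fed back into the model. The sketch assumes each image is already described by a vector of concept activations, fits the surrogate by ordinary least squares against the black-box model's scores, and treats "tuning" as rescaling one surrogate weight. The names `fit_linear_surrogate`, `surrogate_predict`, and `tune_influence`, and the synthetic data, are hypothetical.

```python
import numpy as np


def fit_linear_surrogate(concepts, model_scores):
    """Least-squares fit of model_scores ~ concepts @ weights + bias.

    Only the black-box model's outputs are needed, not its internals,
    which is what makes the explanation model-agnostic.
    """
    # Append a column of ones so the last coefficient acts as the bias.
    design = np.hstack([concepts, np.ones((concepts.shape[0], 1))])
    coef, *_ = np.linalg.lstsq(design, model_scores, rcond=None)
    return coef[:-1], coef[-1]


def surrogate_predict(concepts, weights, bias):
    """Score each sample with the linear surrogate."""
    return concepts @ weights + bias


def tune_influence(weights, concept_index, factor):
    """Return a copy of the weights with one concept's influence rescaled."""
    tuned = weights.copy()
    tuned[concept_index] *= factor
    return tuned


# Synthetic stand-in data (assumption): 200 images, 3 concept activations each.
rng = np.random.default_rng(0)
concepts = rng.random((200, 3))
true_w = np.array([2.0, -1.0, 0.5])
model_scores = concepts @ true_w + 0.3  # stands in for black-box model outputs

weights, bias = fit_linear_surrogate(concepts, model_scores)

# A user decides concept 1 should not influence the prediction at all.
tuned_weights = tune_influence(weights, concept_index=1, factor=0.0)
tuned_scores = surrogate_predict(concepts, tuned_weights, bias)
```

Each fitted weight can be read as the influence of one concept on the model's output, so rescaling a weight is one simple way to picture a user adjusting that influence.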
