Localization-guided image editing via cross-attention refinement

Published on: 2 May 2024

Primary Category: Computer Vision and Pattern Recognition

Paper Authors: Chuanming Tang, Kai Wang, Fei Yang, Joost van de Weijer

Key Details

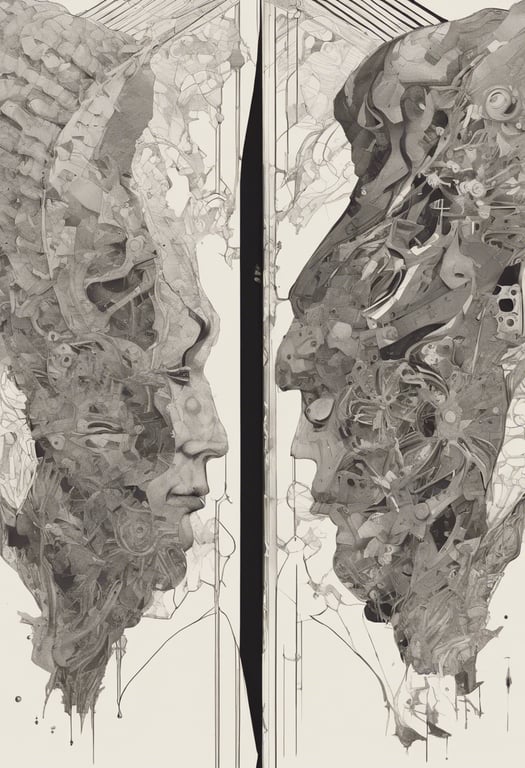

Uses localization priors such as segmentation maps or bounding boxes to guide cross-attention

Refines cross-attention maps during the diffusion model's denoising steps

Enables precise editing of specific objects in an image

Prevents unintended changes to unrelated regions

Evaluated on COCO images, where it shows quantitative and qualitative improvements
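
To make the idea of a localization prior concrete, here is a minimal sketch of how one might check whether a token's cross-attention map stays inside its object region. It is an illustration only, not the paper's code: the helper names `box_to_mask` and `inside_fraction`, the box format `(x0, y0, x1, y1)`, and the toy 4x4 map are all assumptions.

```python
import numpy as np

def box_to_mask(box, height, width):
    """Rasterize a box (x0, y0, x1, y1) into a binary mask.

    Assumed convention: pixel coordinates, with x1 and y1 exclusive.
    """
    x0, y0, x1, y1 = box
    mask = np.zeros((height, width), dtype=np.float32)
    mask[y0:y1, x0:x1] = 1.0
    return mask

def inside_fraction(attn, mask, eps=1e-8):
    """Fraction of one token's attention mass that falls inside the mask."""
    attn = np.asarray(attn, dtype=np.float32)
    return float((attn * mask).sum() / (attn.sum() + eps))

# Toy example: uniform attention over a 4x4 grid, object in the top-left 2x2.
attn = np.full((4, 4), 1.0 / 16, dtype=np.float32)
mask = box_to_mask((0, 0, 2, 2), 4, 4)
frac = inside_fraction(attn, mask)
```

A low fraction suggests the token attends to regions outside its object, which is the kind of leakage that can cause unintended edits elsewhere in the image.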

AI generated summary

This paper proposes a technique called Localization-aware Inversion (LocInv) that uses segmentation maps or bounding boxes to refine cross-attention maps in text-to-image models. This allows for more precise, fine-grained image editing focused on particular objects, while preventing unintended changes to other regions.
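
As a rough illustration of what refining a cross-attention map with a mask can mean, the sketch below down-weights attention outside the object region and rescales the map so its total mass is unchanged. This is a simplified stand-in chosen for clarity, not LocInv's actual procedure, which the summary does not spell out; the function name `refine_attention` and the `strength` parameter are hypothetical.

```python
import numpy as np

def refine_attention(attn, mask, strength=1.0, eps=1e-8):
    """Suppress attention outside a binary mask (hypothetical helper).

    Values outside the mask are scaled by (1 - strength); the result is
    then rescaled so the map keeps its original total mass.
    """
    attn = np.asarray(attn, dtype=np.float64)
    mask = np.asarray(mask, dtype=np.float64)
    weights = mask + (1.0 - strength) * (1.0 - mask)
    refined = attn * weights
    return refined * (attn.sum() / (refined.sum() + eps))

# Toy example: uniform 4x4 attention, object mask over the top-left 2x2.
attn = np.full((4, 4), 1.0 / 16)
mask = np.zeros((4, 4))
mask[:2, :2] = 1.0

refined = refine_attention(attn, mask, strength=1.0)
unchanged = refine_attention(attn, mask, strength=0.0)
```

With `strength=1.0` all attention mass moves inside the mask, while `strength=0.0` leaves the map essentially unchanged; intermediate values trade off localization against fidelity to the original attention.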
