Generating 3D Scenes with Depth Inpainting

Published on:

30 April 2024

Primary Category:

Computer Vision and Pattern Recognition

Paper Authors:

Paul Engstler,

Andrea Vedaldi,

Iro Laina,

Christian Rupprecht

Bullets

Key Details

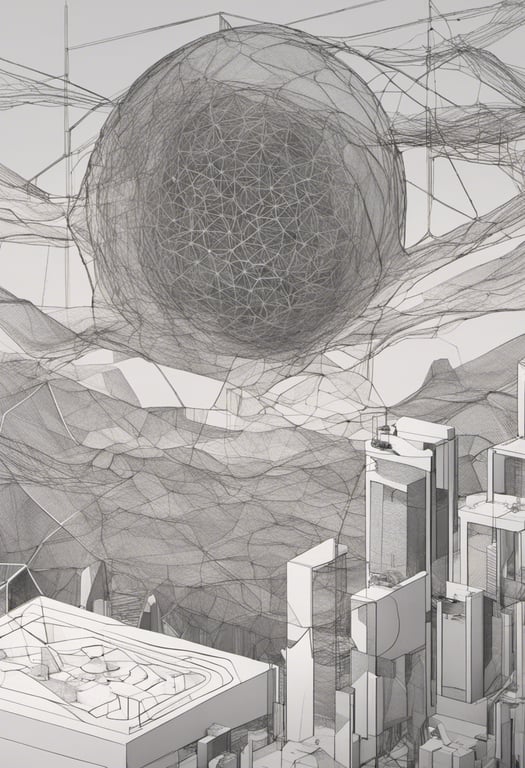

Proposes conditional depth completion model for scene generation

Self-supervised training scheme using teacher distillation

Evaluates depth predictions against ground truth geometry

State-of-the-art depth consistency on ScanNet and Hypersim datasets

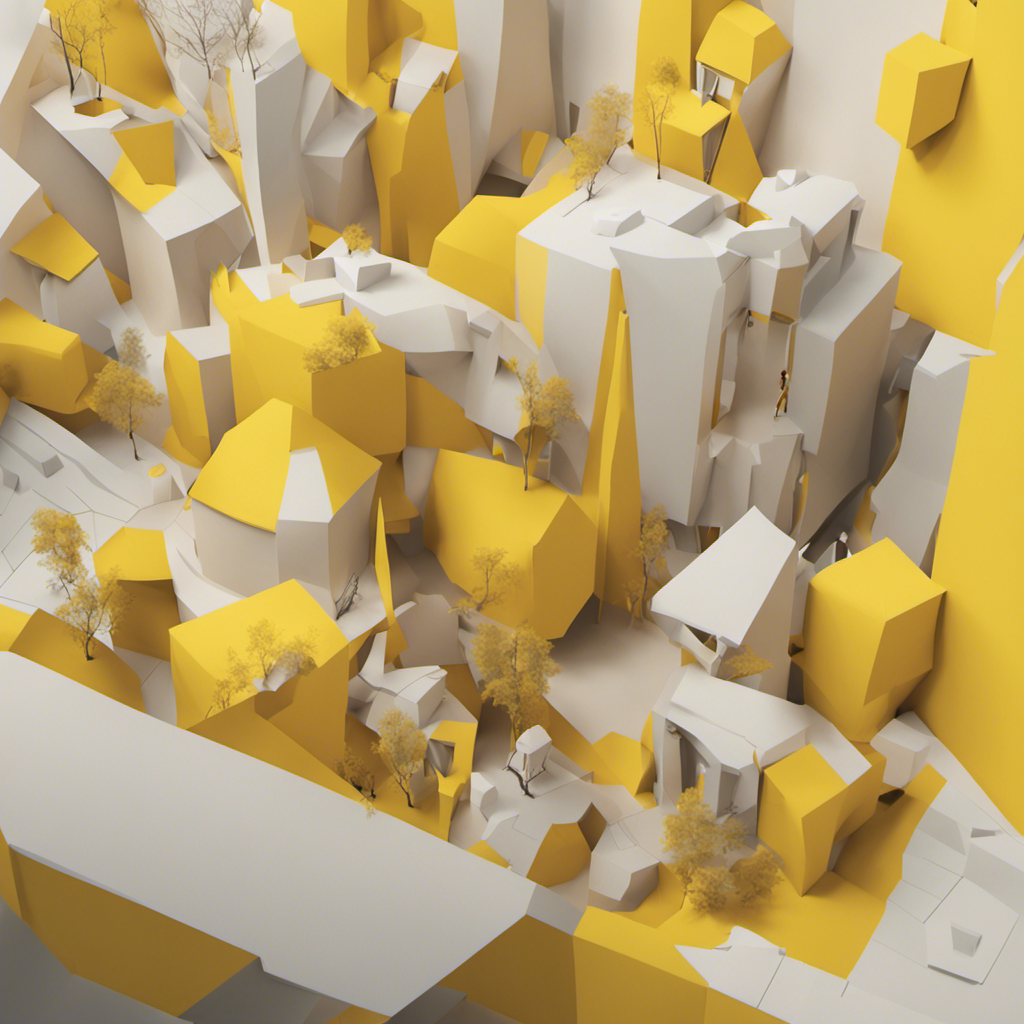

Showcases approach by generating high-quality 360 degree scenes

Explore the topics in this paper

AI generated summary

Generating 3D Scenes with Depth Inpainting

This paper introduces two key innovations for generating 3D scenes from images. First, it develops a depth completion model to extrapolate missing depth values by conditioning on the existing scene geometry. This results in improved coherence compared to off-the-shelf depth estimators. Second, it provides a new benchmark to evaluate scene generation methods based on ground truth depth maps rather than image similarity metrics alone.

Answers from this paper

You might also like

Virtual stereo matching for robust depth completion

Text-driven 3D scene synthesis

Depth: A Guide to Monocular Depth Estimation Using Self-Supervision

Generating 3D Shapes from Images via Multi-View RGB-D Modeling

Using simulations and AI for monocular depth data

Guiding unsupervised segmentation with depth and sampling

Comments

No comments yet, be the first to start the conversation...

Sign up to comment on this paper