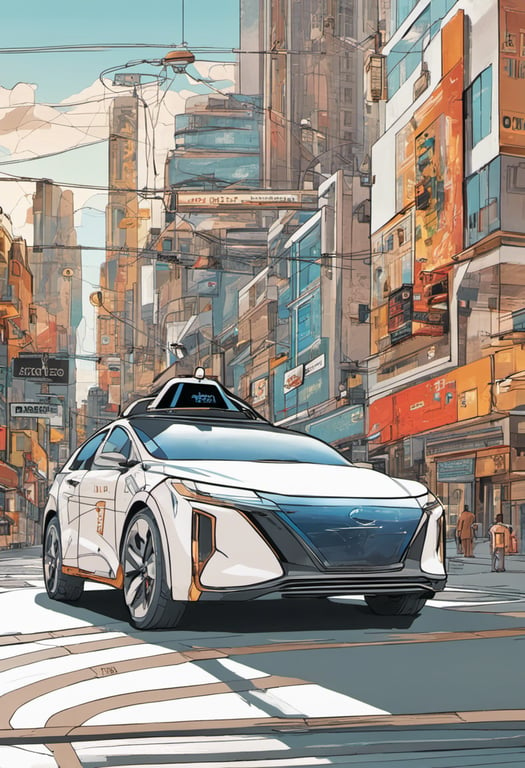

3D reasoning for autonomous driving

Paper Title: OmniDrive: A Holistic LLM-Agent Framework for Autonomous Driving with 3D Perception, Reasoning and Planning

Published on: 2 May 2024

Primary Category: Computer Vision and Pattern Recognition

Paper Authors: Shihao Wang, Zhiding Yu, Xiaohui Jiang, Shiyi Lan, Min Shi, Nadine Chang, Jan Kautz, Ying Li, Jose M. Alvarez

Key Details

- Proposes OmniDrive, a new 3D reasoning framework for autonomous driving
- Features a 3D multimodal language model that uses sparse queries
- Aligns perception and planning in 3D space
- Introduces a benchmark with visual QA tasks for 3D reasoning
- Includes counterfactual trajectory analysis
- Demonstrates the model's strong planning abilities

AI generated summary

This paper proposes OmniDrive, a framework for improving autonomous vehicles' 3D situational awareness and planning. Its core is a novel 3D multimodal language model architecture that uses sparse queries to compress visual data into a condensed representation before passing it to a language model. This representation jointly models dynamic objects and map elements, which aligns perception with planning. The paper also introduces a more challenging benchmark, OmniDrive-nuScenes, whose visual question answering tasks cover scene description, traffic rules, 3D grounding, counterfactual trajectory reasoning, decision making, and planning.
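To make the idea of sparse queries condensing visual data more concrete, here is a minimal sketch in pure Python. It is not the paper's architecture: it assumes plain single-head cross-attention with no learned projections, random vectors in place of real image features, and hypothetical sizes (`NUM_FEATURES`, `NUM_QUERIES`, `DIM`). It only illustrates how a small set of queries can summarize a much larger set of visual tokens into a short sequence that a language model could consume.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attend(queries, features):
    """Each query attends over all feature tokens.

    Returns one condensed vector per query, so the output length depends
    on the number of queries, not on the number of visual tokens.
    """
    dim = len(queries[0])
    scale = 1.0 / math.sqrt(dim)
    condensed = []
    for q in queries:
        # Scaled dot-product score between this query and every feature token.
        scores = [scale * sum(qi * fi for qi, fi in zip(q, f)) for f in features]
        weights = softmax(scores)
        # Attention-weighted average of the feature tokens.
        condensed.append(
            [sum(w * f[d] for w, f in zip(weights, features)) for d in range(dim)]
        )
    return condensed


# All sizes below are hypothetical and chosen only for illustration.
DIM = 8
NUM_FEATURES = 200  # stand-in for flattened multi-view image tokens
NUM_QUERIES = 4     # stand-in for the sparse queries

rng = random.Random(0)
features = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_FEATURES)]
queries = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_QUERIES)]

condensed_tokens = cross_attend(queries, features)
print(len(features), "visual tokens ->", len(condensed_tokens), "condensed tokens")
```

In this sketch the language model would see only `NUM_QUERIES` tokens regardless of how many visual tokens the cameras produce, which is the kind of compression the summary describes. In a trained system the queries and projections would be learned rather than random.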
