Language-guided robot control for surgical tasks

Published on: 8 May 2024

Primary Category: Robotics

Paper Authors: Masoud Moghani, Lars Doorenbos, William Chung-Ho Panitch, Sean Huver, Mahdi Azizian, Ken Goldberg, Animesh Garg

Key Details

Incorporates LLMs for surgical robot planning and control

Enables learning-free automation, with no demonstration examples or predefined motion primitives

Uses perception modules to ground objects in the scene

Includes re-planning and human oversight to mitigate errors

Demonstrated on multiple surgical sub-tasks, both in simulation and on a physical robot

AI generated summary

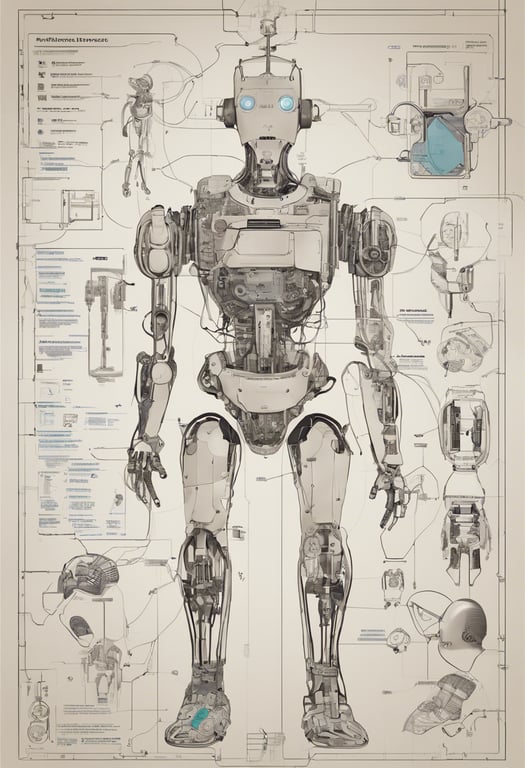

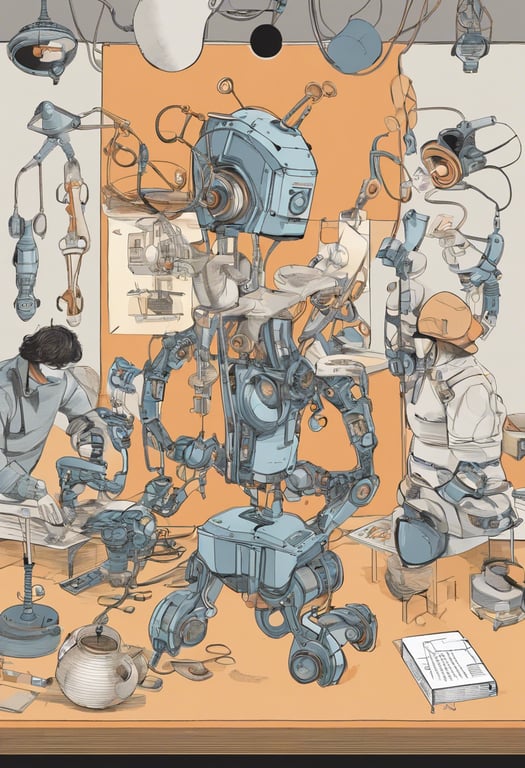

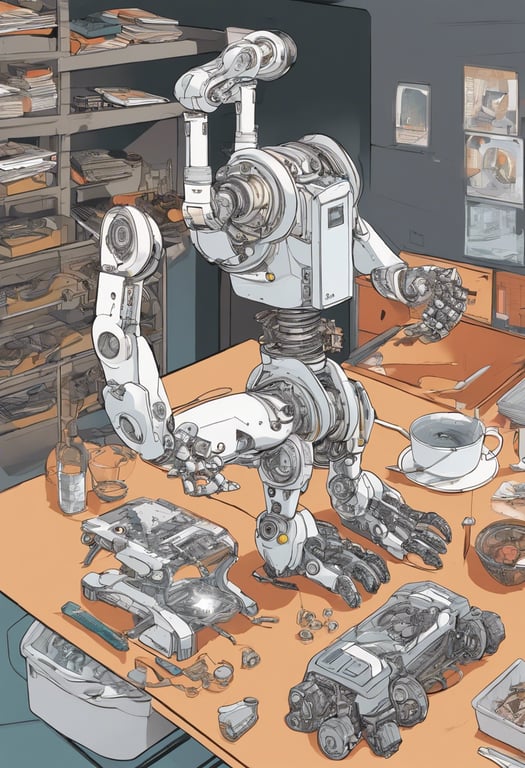

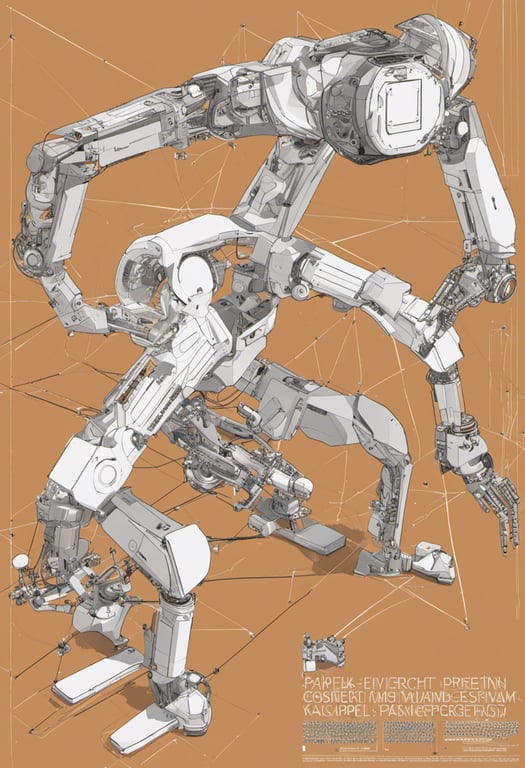

This paper presents SuFIA, a framework that uses large language models (LLMs) and perception modules to plan and execute robotic control for surgical sub-tasks. This enables a learning-free approach to surgical automation that requires neither predefined motion primitives nor demonstration examples. SuFIA incorporates re-planning and human oversight to mitigate errors. Experiments in simulation and on a physical robot platform demonstrate SuFIA's ability to autonomously perform common surgical sub-tasks under challenging conditions.
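To make the plan, ground, oversee, and re-plan cycle described above concrete, here is a minimal sketch in Python. It is not SuFIA's implementation: the LLM is replaced by a hypothetical scripted planner (`scripted_planner`), perception is reduced to a dictionary lookup (`ground`), and the `Step` type, action names, object names, and `approve` callback standing in for the human operator are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

Position = Tuple[float, float, float]  # hypothetical (x, y, z) coordinates


@dataclass
class Step:
    """One plan step: an action name applied to a named scene object."""
    action: str  # hypothetical action vocabulary, e.g. "move_to", "grasp"
    target: str  # object name that perception must ground


def ground(scene: Dict[str, Position], step: Step) -> Optional[Position]:
    """Stand-in perception module: return the target's position, or None."""
    return scene.get(step.target)


def run_task(
    instruction: str,
    planner: Callable[[str, List[str]], List[Step]],
    scene: Dict[str, Position],
    approve: Callable[[Step, Position], bool],
    max_replans: int = 2,
) -> List[str]:
    """Plan, ground, ask for approval, execute; re-plan on grounding failures."""
    log: List[str] = []
    feedback: List[str] = []  # errors passed back to the planner on re-plans
    for _ in range(max_replans + 1):
        for step in planner(instruction, feedback):
            pos = ground(scene, step)
            if pos is None:
                # Grounding failed: record why and request a new plan.
                feedback.append(f"object '{step.target}' not found")
                log.append(f"replan: {step.target} missing")
                break
            if not approve(step, pos):
                # Human oversight: the operator can stop execution at any step.
                log.append(f"halted by operator at {step.action} {step.target}")
                return log
            # A real system would send a motion command here; we only log it.
            log.append(f"{step.action} {step.target} at {pos}")
        else:
            # Inner loop finished without a break: every step was executed.
            log.append("task complete")
            return log
    log.append("gave up after re-planning")
    return log


def scripted_planner(instruction: str, feedback: List[str]) -> List[Step]:
    """Hypothetical stand-in for an LLM call: the first plan uses a wrong
    object name, and the re-plan corrects it once feedback is available."""
    target = "needle" if feedback else "suture_needle"
    return [Step("move_to", target), Step("grasp", target),
            Step("move_to", "tray"), Step("release", "tray")]


if __name__ == "__main__":
    scene = {"needle": (0.1, 0.2, 0.0), "tray": (0.3, 0.0, 0.0)}
    for line in run_task("place the needle on the tray", scripted_planner,
                         scene, approve=lambda step, pos: True):
        print(line)
```

Here the first plan fails to ground `suture_needle`, the error is fed back to the planner, and the corrected plan runs to completion. Passing an `approve` callback that rejects a step (for example, `lambda step, pos: step.action != "grasp"`) shows how an operator veto halts execution before any motion is issued.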
