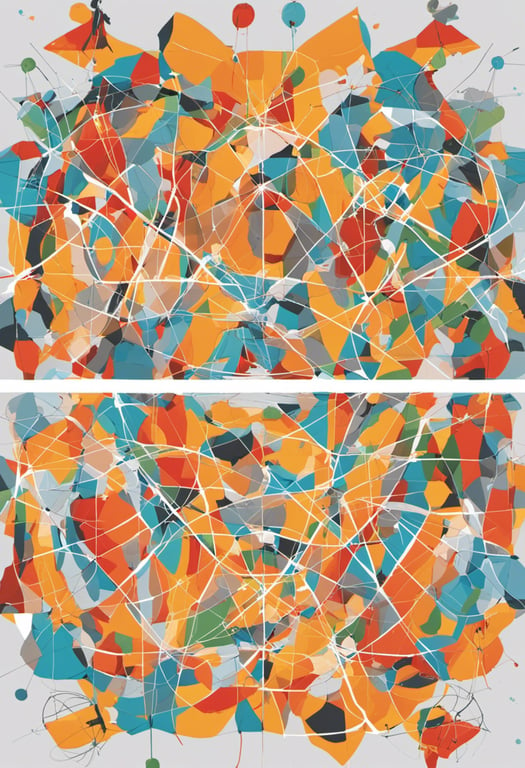

Extensive data augmentation for domain adaptation

Paper Title: ECAP: Extensive Cut-and-Paste Augmentation for Unsupervised Domain Adaptive Semantic Segmentation

Published on: 6 March 2024

Primary Category: Computer Vision and Pattern Recognition

Paper Authors: Erik Brorsson, Knut Åkesson, Lennart Svensson, Kristofer Bengtsson


Key Details

ECAP cut-and-pastes confident pseudo-labeled pixels onto training images

A memory bank stores target domain pseudo-labels during training

Sampling focuses on reliable pseudo-labels, reducing noise

Implemented on top of the MIC model, ECAP advances the state of the art on Synthia->Cityscapes to 69.1 mIoU

Code is available at https://github.com/ErikBrorsson/ECAP
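The memory bank and confidence-focused sampling described above can be illustrated with a minimal sketch. This is not the authors' implementation (see the repository linked above for that). The class name `PseudoLabelBank`, its methods, and the confidence score (mean of the per-pixel maximum softmax probability) are all assumptions chosen for illustration.

```python
import numpy as np


class PseudoLabelBank:
    """Hypothetical memory bank of target-domain pseudo-labels (illustrative only)."""

    def __init__(self):
        # image_id -> (pseudo-label map, scalar confidence score)
        self.entries = {}

    def update(self, image_id, probs):
        """Store the latest pseudo-label for one target image.

        probs: array of shape (C, H, W) with per-class softmax probabilities.
        """
        pseudo_label = probs.argmax(axis=0)  # (H, W) map of class indices
        # Assumed score: mean of the per-pixel maximum probability.
        confidence = float(probs.max(axis=0).mean())
        self.entries[image_id] = (pseudo_label, confidence)

    def most_confident(self, k):
        """Return the ids of the k entries with the highest confidence score."""
        ranked = sorted(self.entries, key=lambda i: self.entries[i][1], reverse=True)
        return ranked[:k]
```

In this sketch, calling `update` again for the same image overwrites its entry, so the bank always reflects the most recent prediction, and `most_confident` restricts sampling to the entries the model is most sure about.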


AI generated summary


This paper proposes ECAP, a data augmentation strategy that improves unsupervised domain adaptation for semantic segmentation. ECAP maintains a memory bank of target-domain pseudo-labels throughout training. It selects the most confident pseudo-labels and cuts and pastes them onto source-domain images to augment the training data. This makes use of reliable pseudo-labels and reduces the impact of erroneous ones. Implemented on top of the MIC model, ECAP reaches 69.1 mIoU on Synthia->Cityscapes, setting a new state of the art.
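The cut-and-paste step in the summary can be sketched as a masked copy from a pseudo-labeled target image onto a source image. This is only a sketch under stated assumptions. The function name `paste_confident_pixels`, the per-pixel confidence map, and the fixed threshold of 0.9 are hypothetical and are not taken from the paper.

```python
import numpy as np


def paste_confident_pixels(src_img, src_lbl, tgt_img, tgt_pseudo, tgt_conf, threshold=0.9):
    """Paste confident target pixels (and their pseudo-labels) onto a source sample.

    src_img, tgt_img: (H, W, 3) images; src_lbl, tgt_pseudo: (H, W) label maps;
    tgt_conf: (H, W) per-pixel confidence. The threshold value is an assumption.
    """
    mask = tgt_conf >= threshold  # True where the pseudo-label is trusted
    # Broadcast the mask over the channel axis to mix the two images.
    mixed_img = np.where(mask[..., None], tgt_img, src_img)
    # Use the pseudo-label where pasted, the source ground truth elsewhere.
    mixed_lbl = np.where(mask, tgt_pseudo, src_lbl)
    return mixed_img, mixed_lbl, mask
```

Pixels below the threshold keep the source image and its ground-truth label, so low-confidence pseudo-labels never enter the augmented sample in this sketch.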

Answers from this paper

You might also like

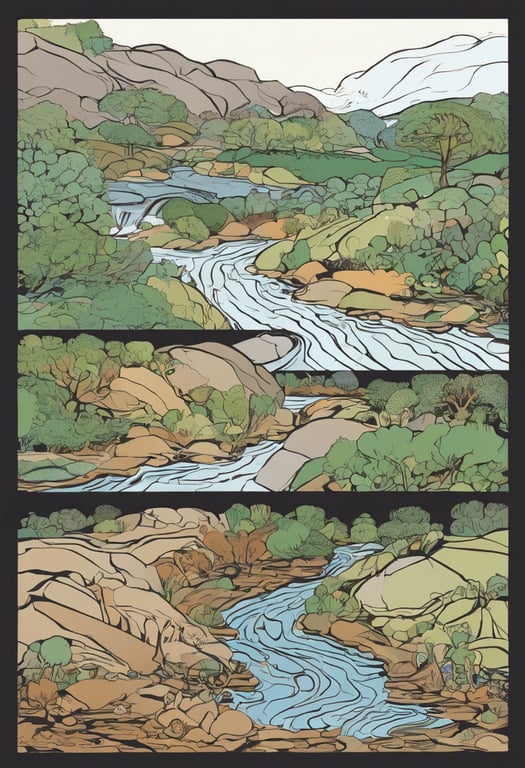

Self-supervised camouflage segmentation

Domain adaptation for object detection across visual domains

Dual-domain image fusion for remote sensing semantic segmentation

Hybrid learning for event camera semantic segmentation

Model adaptation via collaborative training

Backpropagation-free adaptation for 3D test-time learning

Comments

No comments yet, be the first to start the conversation...

Sign up to comment on this paper