Vision Transformer for Semantic Image Compression

Paper Title:

Transformer-Aided Semantic Communications

Published on:

2 May 2024

Primary Category:

Computer Vision and Pattern Recognition

Paper Authors:

Matin Mortaheb,

Erciyes Karakaya,

Mohammad A. Amir Khojastepour,

Sennur Ulukus


Key Details

Employs vision transformers for semantic image compression under bandwidth constraints

Creates an attention mask that prioritizes the most semantically critical image segments for transmission

Encodes each image part in proportion to its semantic information content

Optimizes bandwidth usage while preserving image semantics

Evaluation on TinyImageNet shows that semantics are preserved even at high compression rates
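The idea of encoding each part in proportion to its semantic content can be illustrated with a small allocation routine. This is a minimal sketch, not the authors' method: it assumes each patch already has a non-negative importance score (for example, derived from the attention mask) and that the channel offers a fixed integer budget of symbols; both are hypothetical here. The illustrative function `allocate_symbols` splits the budget proportionally and uses largest-remainder rounding so the integer allocations sum exactly to the budget.

```python
from typing import List, Sequence


def allocate_symbols(importance: Sequence[float], budget: int) -> List[int]:
    """Split an integer symbol budget across patches in proportion to importance.

    Hypothetical helper: `importance` holds one non-negative score per patch.
    """
    if budget < 0:
        raise ValueError("budget must be non-negative")
    if any(w < 0 for w in importance):
        raise ValueError("importance scores must be non-negative")
    total = sum(importance)
    if total <= 0:
        raise ValueError("importance scores must have a positive sum")
    # Ideal (fractional) share of the budget for each patch.
    shares = [budget * w / total for w in importance]
    # Round down first; shares are non-negative, so int() acts as floor.
    alloc = [int(s) for s in shares]
    # Give the remaining symbols to the patches with the largest remainders.
    # sorted() is stable, so ties keep their original patch order.
    leftover = budget - sum(alloc)
    order = sorted(range(len(shares)), key=lambda i: shares[i] - alloc[i], reverse=True)
    for i in order[:leftover]:
        alloc[i] += 1
    return alloc
```

For instance, scores `[2, 1, 1]` with a budget of 8 give `[4, 2, 2]`. Largest-remainder rounding is one simple choice; it guarantees the total never exceeds the bandwidth budget while keeping each patch within one symbol of its exact proportional share.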


AI generated summary


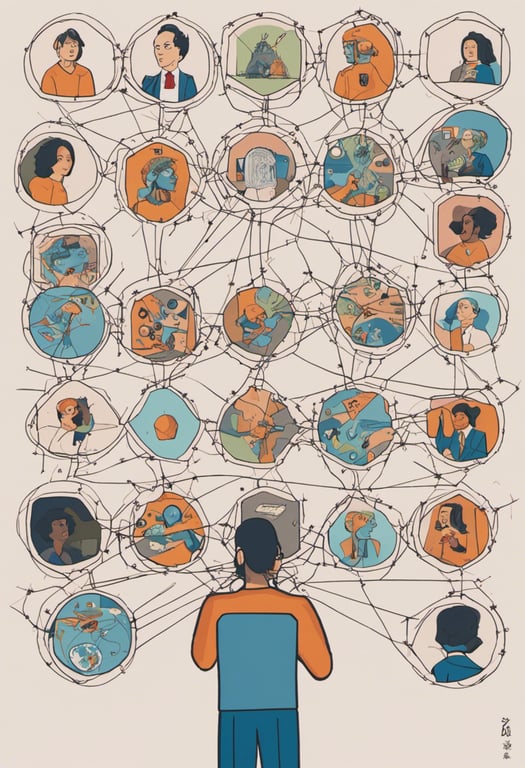

This paper proposes a novel framework for semantic image communication using vision transformers. The framework builds an attention mask that ranks image segments by semantic importance and prioritizes the critical ones for transmission, so that reconstruction at the receiver focuses on the key objects in the image. By encoding each part in proportion to its semantic content, the approach improves both semantic communication quality and bandwidth efficiency. In experiments on TinyImageNet, semantics are preserved even when only a fraction of the encoded data is transmitted.
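The attention-mask step can be sketched as top-k patch selection. This sketch assumes, hypothetically, that each patch's importance is the attention weight the ViT's [CLS] token assigns to it and that the transmitter keeps a fixed fraction of patches; the summary does not state which score or selection rule the paper actually uses. The names `attention_mask`, `transmit` and `reconstruct` are illustrative only, and dropped patches are simply zero-filled at the receiver.

```python
from typing import Dict, List, Sequence


def attention_mask(scores: Sequence[float], keep_fraction: float) -> List[bool]:
    """Mark the most-attended patches for transmission (hypothetical rule)."""
    if not 0.0 <= keep_fraction <= 1.0:
        raise ValueError("keep_fraction must lie in [0, 1]")
    n = len(scores)
    k = int(keep_fraction * n)  # number of patches that fit the budget
    # Rank patch indices from highest to lowest attention score.
    ranked = sorted(range(n), key=lambda i: scores[i], reverse=True)
    kept = set(ranked[:k])
    return [i in kept for i in range(n)]


def transmit(codes: Sequence[List[float]], mask: Sequence[bool]) -> Dict[int, List[float]]:
    """Send only the encoded patches selected by the mask, keyed by position."""
    return {i: list(code) for i, (code, keep) in enumerate(zip(codes, mask)) if keep}


def reconstruct(received: Dict[int, List[float]], n: int, dim: int) -> List[List[float]]:
    """Rebuild the full patch grid, zero-filling patches that were not sent."""
    return [received.get(i, [0.0] * dim) for i in range(n)]


if __name__ == "__main__":
    scores = [0.1, 0.4, 0.2, 0.3]  # toy attention weights for 4 patches
    codes = [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0], [4.0, 4.0]]
    mask = attention_mask(scores, keep_fraction=0.5)
    print(mask)  # [False, True, False, True]
    print(reconstruct(transmit(codes, mask), n=4, dim=2))
```

Sending patch positions alongside the codes lets the receiver place each surviving patch correctly; a learned decoder would then fill in the zeroed regions rather than leaving them blank.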


You might also like

Efficient image transmission through neural networks

Efficient image communication for AIoT using deep semantic segmentation and restoration

Efficient vision transformers for semantic segmentation

Lightweight clustering for semantic segmentation

Efficient semantic segmentation with a single CNN

Semantic face image generation preserving identity
